Table of Contents

Distributed Evolution of Swarms

This page is a short summary of my diploma thesis (equivalent to master's thesis). Unfortunately, there is only a german version available.

- Diploma Thesis, 8.5MB PDF, 141 pages

The diploma thesis downloadable on this page is not exactly the one submitted during the examination (for example, I left away personal acknowledgements and statement of authorship, and tweaked the typography).

What's all about?

Put in a short way: I not only wanted to simulate swarm behavior – I wanted to write a large evolution so that enables swarmbehavior to emerge automatically. One can consider it as a nice, colorful, scientifically affected tech demo.

So, throughout the thesis, a generic software infrastructure was developed for performing experiments in swarm evolution. It enables to not just evolve swarm behavior, but also to distribute the effort among computer clusters for large evolution experiments. Because I bullheadedly wanted to do everything myself, I implemented my own small network protocoll to accomplish this.

Different to related work, in my experimental setup, the evolution of behaviors was much less narrowed down by common constraints. For instance, parameters of sensors and acting elements were evolved, extensive simulations were performed following realistig physical laws, the swarmer controllers were growing, recurrent, evolvable neural nets, and much more.

Different to related work, in my experimental setup, the evolution of behaviors was much less narrowed down by common constraints. For instance, parameters of sensors and acting elements were evolved, extensive simulations were performed following realistig physical laws, the swarmer controllers were growing, recurrent, evolvable neural nets, and much more.

The software written for the diploma thesis roughly includes three parts (all of which I wrote in JAVA), which I introduce in a short way.

- A generalized evolution software including efficient distribution of calculating efforts on lots of computers,

- a swarm simulation engine that builts upon a physics framework, and

- a topology evolvable neural network.

Evolution Framework

The evolution framework was written in a completely generalized way – one could use it for evolving solutions with respect to any evolvable problem. Evolving whole bio inspired swarms is just one possible usage scenario.

The evolution framework was written in a completely generalized way – one could use it for evolving solutions with respect to any evolvable problem. Evolving whole bio inspired swarms is just one possible usage scenario.

- The framework included an intuitive GUI (see figure on the right).

- Lots of evolution strategies and parameters have could be chosen and altered during run time.

- The framework was able to distribute the calculation effort of evaluations, mutations and crossovers on local cores.

Cluster Evolution

For larger scale evolutions like the ones in this thesis I wrote a distribution protocol based on TCP with separate GUI (see figure on the right). There was one evolution server, and many clients.

For larger scale evolutions like the ones in this thesis I wrote a distribution protocol based on TCP with separate GUI (see figure on the right). There was one evolution server, and many clients.

- Fully automated update and deploy: Clients were able to update theirselves by retrieving protocol updates from the evolution server. This kept me from having to visit all of them when altering the protocol / adding features etc.

- Client metering: Clients benchmark theirselves, connect to the server and then download the JAVA classes necessary for the problem to evolve solutions for (as the client is implemented in a generalized way as well, initially it cannot know what exactly is to evolve).

- The server distributes the java classes of the problem to evolve among the clients and also dispatches „chunks“ of „calculation jobs“ to clients. Such chunks may contain mutation, crossover or evaluation jobs.

- For any of these job kinds, the average calculation time is continually maintained, adjusted by the speed of the respective client.

- Dependent on these averaged calculation times, client speeds, client bandwidth, and network latency, the server determines, how many jobs are put in a „chunk“, which is then dispatched to a client. One example: A fast client with extremely large latency gets a large chunk to reduce latency overhead.

- Of course, there are problems. Clients may fail, or some few slow clients (or corrupted jobs, „staggers“) could slow down the whole process. To make the system robust, the calculation queue was implemented in a transactional way, and it was logged to which clients calculation jobs were handed over to. Jobs that seemed to take unexpected long calculation time were dispatched several additional times, until one valid result was received.

- Within the clients, jobs were distributed further among the available cores.

- As jobs and results were highly repetitive, of course they were compressed.

This way of distribution was not so bad: 85%-90% of the given calculation time was used, even under bad circumstances: The evolution server only had a standard cable internet connection (still I had to choose it as a server because I could guarantee its reliability).

Swarm Simulator

Like the evolution framework, the swarm simulator was implemented in an abstract way, so in general, lots of experiments could be built on top of it.

- More to the point, I took the physics framework Phys2D as a base (today, I would choose a framework with better maintenance, like for example the related http://www.jbox2d.org/JBox2D). The physics engine makes the swarmer movements look like they were ice skating in the videos

. Despite the maintenance, it worked pretty well.

- Internally, Phys2D uses some nice quad tree collision detection, which I tweaked for better performance. Sensorics could be easily implemented as collisions between „sensor areas“ with „emmitters“. I did not implement any occlusion tests.

Topology Evolvable Neural Net

What remains, is the topology evolvable neural network, the brain of the swarmers. This is the main part of the parameters adjusted by evolution. Net input are all swarmer sensorics, outputs went to the actuators (movement, communication, and so on). Everything in between was evolved.

- To be able to evolve arbitrary topologies (namely add and remove synapses and neurons), the neural network was implemented as adjacency matrix.

- For faster propagation, an additional connectivity cache was implemented.

However, with respect to this, see also the section „lessons learned“ below.

Experiments

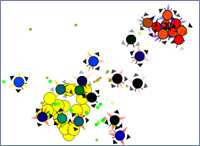

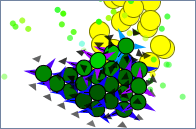

After the implementation period, a family of nature-inspired evolution experiments with different environments and swarmers was performed (10 experiments with 10 evolutions each). Evolved swarms were first tested by an automated testsuite to decide whether detailed analysis and visual observation is applicable. How behavior is elicited using evolutions, is shown in the following Youtube-Video.

Throughout the original work, 10 experiments including 100 evolutions have been performed, and a vast variety of behaviours was elicited. Thus, it is presented, how volatile behavior patterns can be, that result from less constrained evolutions. Elicited patterns were discussed and extensively and presented in text, image and video material.

Given the presented artificial ecology and free evolutions, it seemed remarkably easy to evolve mechanisms that can be seen as cooperation among the swarm. Capabilities were measured to be of significant importances, that make no sense in individual behaviour (such as several communicational capabilities or social interaction forces).

The variety of evolved behaviours includes several behavioural patterns observed in nature, such as

- mutual inhibition of reproduction in order to budget food,

- sophisticated aggregation behaviour,

- marking food sources with pheromones,

- and exploration.

- Forms of communication were evolved, simple, though essential for the swarm.

Behaviours were evolved which can be observed everywhere in nature – however, in a synthetical and, therefore, completely transparent analyzable way. Even though the experimental swarms were assembled using fictional individuals, the results exhibit the vast, inspiring potential of such free experiments in the field of synthetical biology. This potential is also shown throughout the following talk trailer on YouTube.

Some Figures

The evaluations were distributed via TCP/IP and therefore carried out on a variable number of heterogeneous multi-CPU-computers in parallel. During peak periods, about 100 CPUs were used in parallel. We performed 10^{10} simulation steps per experiment (remember: one experiment contained 10 evolution runs), continuously simulating approx. 10 to 50 swarmers and possibly thousands of objects, within 0.5 to 4 days. This represents an effective simulation speed of 50000 to 400000 simulation steps a second with realistic 2d rigid body physics taken into account.

The evaluations were distributed via TCP/IP and therefore carried out on a variable number of heterogeneous multi-CPU-computers in parallel. During peak periods, about 100 CPUs were used in parallel. We performed 10^{10} simulation steps per experiment (remember: one experiment contained 10 evolution runs), continuously simulating approx. 10 to 50 swarmers and possibly thousands of objects, within 0.5 to 4 days. This represents an effective simulation speed of 50000 to 400000 simulation steps a second with realistic 2d rigid body physics taken into account.

Nearly 30.000 lines of code - Lessons learned

- Evolving colorful swarms rocks

- Don't do everything yourself. Next time, on another planet, I would use some evolution framework and cluster parallelization mechanisms maintained by others. Maybe other parts as well. I then could use the time saved to tweak some core data structures and algorithms to be tailored to my special needs. Nevertheless: The journey is the reward! Because of my bullheadedness, I learned a whole lot.

- Algorithmics and data structure analysis are your friends! For instance, a significant part of the calculation effort was caused by the neural networks. This is why after the thesis, I implemented SNIPE, which offers a lot more speed and scalability by design. Funnily, after finishing the core data structures I found out that I really like working on algorithms and tailored data structures and changed fields. Now, I pursue activities in swarm behavior and classical algorithmics.

- The evolution operators for evolving neural nets weren't so sophisticated, today I would take some others.